Magical Scepter

A handheld “magic scepter” built on an Arduino Nano ESP32 that turns motion gestures into spells—synchronized WS2812 LED-ring effects, embedded SFX, and ElevenLabs text-to-speech—while hosting a WiFi web UI for configuration, spell management, and real-time monitoring.

A compact build on the Nano's ESP32-S3, tuned for stable, real-time motion sensing and synchronized audio/visual feedback.

Gestures are recorded from the web UI, downsampled and normalized into fixed-length templates, and stored in flash. At runtime, recognition combines Dynamic Time Warping (DTW) with confidence thresholds, cooldowns, and optional combo sequences, then triggers LED overrides followed by SFX → TTS audio. Spells can also fire “real world” actions: DIY effects, lights, and other home automations.
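The template-and-match idea can be sketched in a few lines of Python. This is an illustration only, not the scepter's firmware (which runs on the ESP32): the template length of 32, the zero-mean/unit-peak normalization, the path-length scaling of the DTW cost, and the `max_distance` threshold are all assumptions chosen for the example.

```python
import math

TEMPLATE_LEN = 32  # assumed template length; the firmware's real value is not stated


def resample(samples, n=TEMPLATE_LEN):
    """Linearly interpolate a list of (x, y, z) tuples to exactly n points (n >= 2)."""
    if len(samples) == 1:
        return [samples[0]] * n
    out = []
    for i in range(n):
        t = i * (len(samples) - 1) / (n - 1)
        lo = int(t)
        hi = min(lo + 1, len(samples) - 1)
        frac = t - lo
        out.append(tuple(a + (b - a) * frac for a, b in zip(samples[lo], samples[hi])))
    return out


def normalize(points):
    """Subtract the per-axis mean, then scale so the largest component is 1."""
    dims = len(points[0])
    means = [sum(p[d] for p in points) / len(points) for d in range(dims)]
    centered = [tuple(p[d] - means[d] for d in range(dims)) for p in points]
    peak = max(max(abs(v) for v in p) for p in centered) or 1.0
    return [tuple(v / peak for v in p) for p in centered]


def make_template(samples):
    """Downsample and normalize raw samples into a fixed-length template."""
    return normalize(resample(samples))


def dtw_distance(a, b):
    """Classic O(n*m) DTW with Euclidean point cost, scaled by n + m."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = math.dist(a[i - 1], b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m] / (n + m)


def recognize(samples, templates, max_distance=0.15):
    """Return (best_name, distance), or (None, distance) if nothing is close enough."""
    query = make_template(samples)
    best_name, best = None, float("inf")
    for name, template in templates.items():
        d = dtw_distance(query, template)
        if d < best:
            best_name, best = name, d
    return (best_name, best) if best <= max_distance else (None, best)
```

A straight flick recorded at one speed and replayed at another resamples to the same template, so it matches with a distance near zero, while a circular motion is rejected by the threshold. Cooldowns and combo sequences would sit on top of `recognize` and are not shown.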

The scepter runs a built-in HTTP server over WiFi and advertises itself via mDNS as scepter.local. The interface covers spell CRUD + testing, volume/voice tuning, voice assistant configuration (OpenAI API), LED parameters, IMU calibration, WiFi management, and live status + orientation visualization.

On boot the ESP32 brings up WiFi + the HTTP server, loads stored spells/settings from flash, then runs three loops in parallel: IMU sampling + DTW matching, FastLED ring animation, and an I2S audio pipeline that sequences SFX → ElevenLabs TTS. Voice input can be routed to a configurable OpenAI-backed assistant without blocking gesture recognition.

Overview

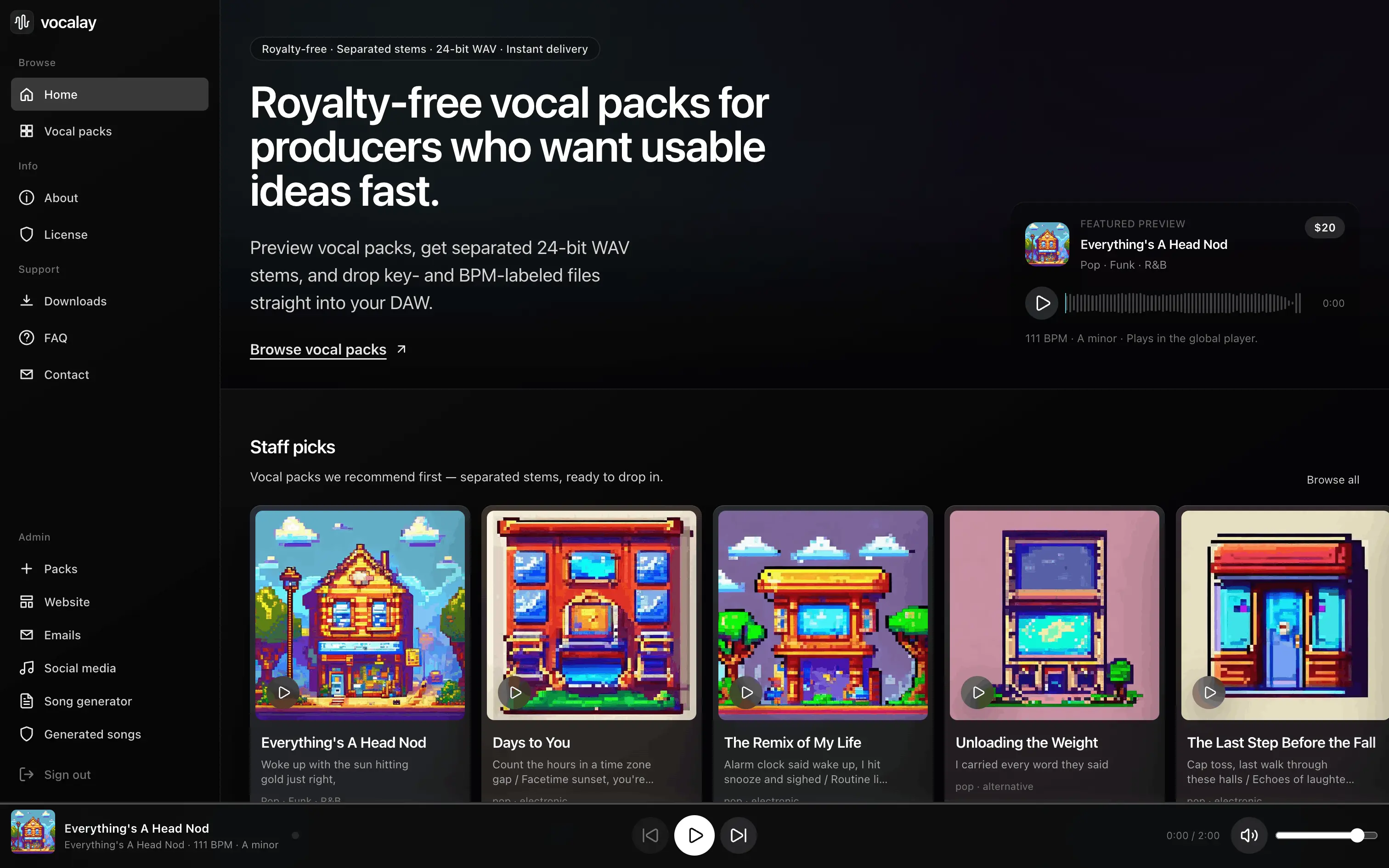

A complete pipeline for producing and selling AI-generated vocal sample packs. A 3B-parameter music model was fine-tuned on curated tracks and paired with a generation and post-production workflow to produce vocals at scale. Each pack contains a full vocal track with separated stems, BPM/key metadata, and word-level lyric timestamps.

Reverse-engineered the inference pipeline and built a full training stack from scratch. Frozen embeddings, gradient checkpointing, 8-bit AdamW, and decoder loss skipping brought VRAM from 40GB+ down to 22GB — trainable on a single RTX 3090 in ~20 hours for 30k steps.

Python API serving the fine-tuned model with supporting post-production tasks: lyrics via LLM tool calling, stem separation (Demucs), BPM/key detection, word-level timestamps (Whisper), and cover art (SD 1.5). Includes a creative seeds system for varied output and reference audio conditioning via MuQ embeddings.

Next.js web app with streaming lyrics generation, reference audio library, batch mode, draft management with inline playback, on-demand stem separation, and a release workflow with auto-generated cover art. Stripe for payments, Neon PostgreSQL for data, Vercel Blob for released audio.

Overview

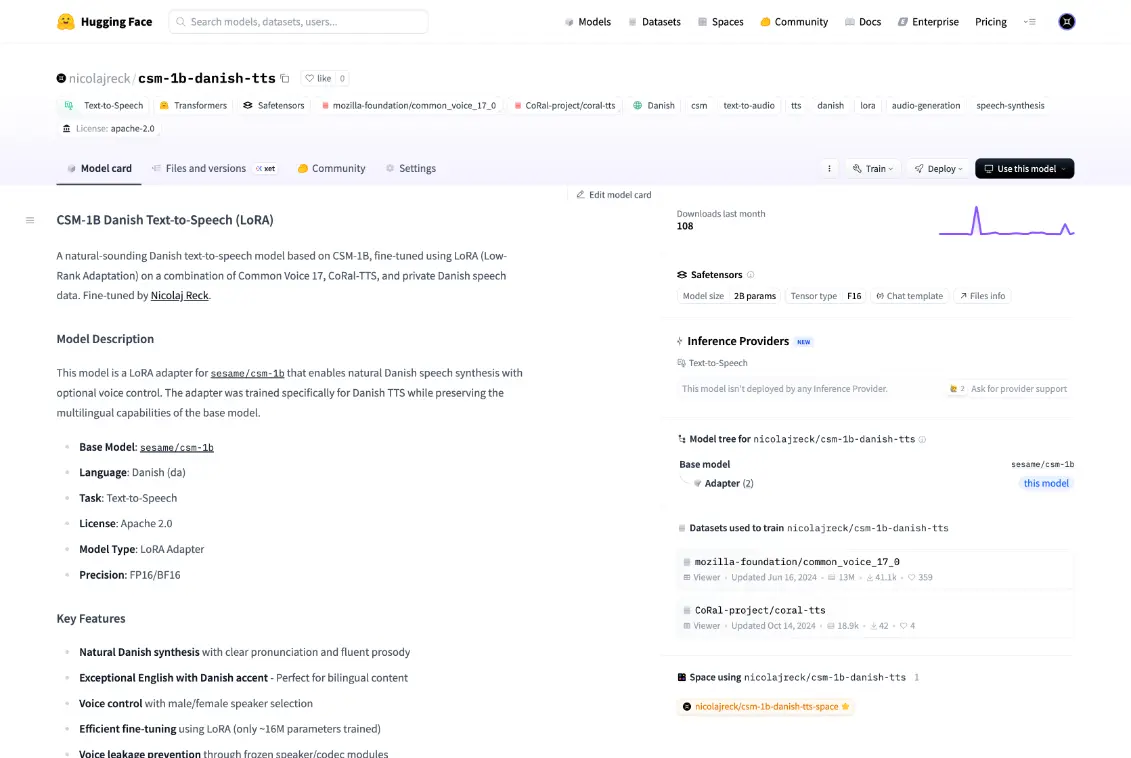

A Danish text-to-speech adapter built on top of sesame/csm-1b. The work here is making Danish sound right: cleaner pronunciation, better pacing, and fewer “weird” drops in longer sentences.

Trained as a LoRA adapter on a mixed Danish dataset (public corpora + a private extension). Data is filtered/normalized and text is cleaned to keep training stable and output consistent. The setup also supports two voice presets controlled from the prompt.

Packaged with a simple demo and curated audio samples, so it’s easy to test quickly and share results. Released under Apache-2.0 and requires access to the base model.

Overview

The platform is built for teams drowning in documents. It takes messy inputs and turns them into structured knowledge you can query — with answers that stay tied to the original source.

Upload or connect sources, then store everything in a versioned, consistent format: pages, text blocks, tables, and metadata. OCR is used when needed — but the goal is reliable structure, not just raw text.
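One way to picture that versioned format is a small set of record types. The sketch below is hypothetical: every class and field name (`Region`, `TextBlock`, `Table`, `DocumentVersion`, `cite`) is invented for illustration, and pages are represented simply as a page number on each region rather than as separate objects.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Region:
    """A rectangle on a page; the coordinate units are an assumption."""
    page: int
    x0: float
    y0: float
    x1: float
    y1: float


@dataclass
class TextBlock:
    text: str
    region: Region
    from_ocr: bool = False  # True when the text came from OCR, not a text layer


@dataclass
class Table:
    rows: list  # list of rows, each a list of cell strings
    region: Region


@dataclass
class DocumentVersion:
    doc_id: str
    version: int
    metadata: dict = field(default_factory=dict)
    blocks: list = field(default_factory=list)
    tables: list = field(default_factory=list)

    def cite(self, needle):
        """Return (doc_id, version, region) for each text block containing needle."""
        needle = needle.lower()
        return [
            (self.doc_id, self.version, block.region)
            for block in self.blocks
            if needle in block.text.lower()
        ]
```

Because every block carries its own region and every document carries a version number, anything derived from the text can point back to an exact place in an exact revision of the source.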

Pull out the fields that matter using repeatable templates per document type. Tables, entities, key values, and references are captured with confidence scores and review hooks, so you can scale without losing control.

Hybrid search (keyword + semantic) plus Q/A that cites the exact pages/regions it used. Fast answers are nice — trustworthy answers with traceability are the point.
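The text does not say how the keyword and semantic results are merged. A common option is reciprocal rank fusion (RRF), sketched here as an assumption rather than as the platform's actual method; the hit IDs and the constant `k=60` are illustrative.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of hit IDs; each hit scores 1 / (k + rank) per list."""
    scores = {}
    for ranking in rankings:
        for rank, hit_id in enumerate(ranking, start=1):
            scores[hit_id] = scores.get(hit_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the order is deterministic.
    return sorted(scores, key=lambda hit_id: (-scores[hit_id], hit_id))


# Hypothetical hit IDs of the form "<document>#p<page>".
keyword_hits = ["contract.pdf#p3", "contract.pdf#p1", "annex.pdf#p7"]
semantic_hits = ["contract.pdf#p1", "policy.pdf#p9", "contract.pdf#p3"]
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Hits that appear in both lists rise to the top, and since each ID encodes a document and page, the answer step can cite exactly the pages it drew from.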

Event-driven pipelines for classification, routing, approvals, and exports. Add policy checks, sensitive-data handling, and integrations to downstream systems without rewriting the core.

Overview

A practical grocery assistant built around Rema1000’s full catalog. Plan meals, generate structured lists, match ingredients to real products with prices, and ask questions with full-inventory context.

Suggests meals from constraints and converts everything into a structured shopping list with quantities — optionally split per meal.

Maps ingredients to actual products with prices, handling synonyms, packaging sizes, and sensible alternatives so the basket matches intent.
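A minimal sketch of the matching step, assuming a synonym table and a cheapest-total rule for choosing pack sizes. The product names, prices, and synonyms below are invented example data, not taken from the real catalog, and the actual matcher may weigh alternatives differently.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    ingredient: str  # canonical ingredient key
    pack_grams: int
    price_ore: int   # price per pack in øre, kept as an integer to avoid float rounding


# Hypothetical synonym table mapping user wording to canonical ingredient keys.
SYNONYMS = {"ground beef": "minced beef", "spring onion": "scallion"}


def canonical(ingredient):
    key = ingredient.strip().lower()
    return SYNONYMS.get(key, key)


def cheapest_cover(ingredient, grams_needed, catalog):
    """Pick the product whose whole packs cover grams_needed at the lowest total.

    Returns (product, packs, total_ore), or None if nothing matches.
    """
    key = canonical(ingredient)
    options = []
    for product in catalog:
        if product.ingredient != key:
            continue
        packs = math.ceil(grams_needed / product.pack_grams)
        options.append((packs * product.price_ore, packs, product))
    if not options:
        return None
    total, packs, product = min(options, key=lambda o: (o[0], o[1]))
    return product, packs, total


# Made-up catalog entries for illustration only.
catalog = [
    Product("Hakket oksekød 400 g", "minced beef", 400, 3500),
    Product("Hakket oksekød 1 kg", "minced beef", 1000, 7900),
]
match = cheapest_cover("Ground beef", 750, catalog)
```

Here 750 g of "ground beef" resolves to two 400 g packs (7000 øre) rather than one 1 kg pack (7900 øre). A fuller matcher would also consider leftovers and substitutes.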

Ask about anything in the catalog: comparisons, alternatives, best value picks, or how to build a basket for a recipe.

Upload an image and get it converted into a recipe and product basket. You can open the matched products and buy them directly on the B2C platform.

Built to finish fast: review, swap items, then move into a basket you can actually purchase — not just a text list.

Overview

The UI Studio is my go-to system for building products fast without the UI drifting over time. It’s a full kit: foundations → components → real layouts, all designed to work together.

Tokens and rules that keep everything consistent: type scale, spacing, radii, shadows, colors, and layout grid. This is the part that makes the rest plug-and-play.

A component library built around real product needs: navigation, forms, tables, cards, modals, empty states, and feedback. Variants are kept tight so it stays usable.

Not just atoms. The kit includes real page sections for marketing and product UI — so you can assemble pages like LEGO and still end up with something coherent.

Designed for handoff and scale: clear structure, documentation where it matters, and a system that stays maintainable when more people touch it.

Overview

A Figma plugin that builds interfaces using The UI Studio design system. The goal is speed without drift: consistent layout, consistent tokens, and zero time wasted on starting from an empty frame.

Drops in full page sections and assembles them into a coherent layout. This includes real structure — not just a stack of random components.

Applies spacing, typography, and color theme from the system so the output stays consistent. It generates UI that already follows the rules.

Adjusts copy placeholders and images so you get something presentable right away — usable for demos, flows, and quick iterations.

Pick a page type, choose sections, apply a theme, and generate. From there it’s normal Figma: tweak, swap, and ship — without rebuilding the same structure every time.

Overview

A free avatar library made for product UI. The goal is simple: stop shipping ugly placeholders, and keep avatars consistent across the product.

A large set of avatars with a clean baseline style — meant to cover the common “we need avatars everywhere” problem without custom illustration work.

Quick customization where it matters: color + shape options, so the avatars can match the UI and still feel intentional.

Exports are built for UI: transparent assets you can drop straight into dashboards, prototypes, and decks.

Works directly where designers are: instant access in Figma, plus downloads for other popular design tools.

Commercially free — built to be used in real products without license friction.